Decision-Making in the Age of Generative Artificial Intelligence

April 2, 2025

Frank Schmid

Generative artificial intelligence (AI) was recognized with two Nobel Prizes in 2024, a remarkable achievement given the technology’s youth. The deep learning revolution that enabled generative AI began in 2012 when a deep neural network called AlexNet won the ImageNet computer vision challenge. Geoffrey Hinton of the University of Toronto, who led the team, was awarded the Nobel Prize in Physics alongside John Hopfield, a fellow contributor to the algorithmic foundations of artificial intelligence.[1]

The Nobel Prize in Chemistry was awarded to three researchers who applied generative AI to predict the three-dimensional structure of proteins. Among the recipients was Demis Hassabis, whose team at Google DeepMind developed AlphaFold, a deep learning system that predicted with high accuracy the structure of all 200 million known proteins. Only a few years passed between AlphaFold 2 winning the protein structure prediction challenge CASP14 in 2020,[2] the publication of the AlphaFold Protein Structure Database in 2021,[3] and the Nobel Committee’s recognition of this work.

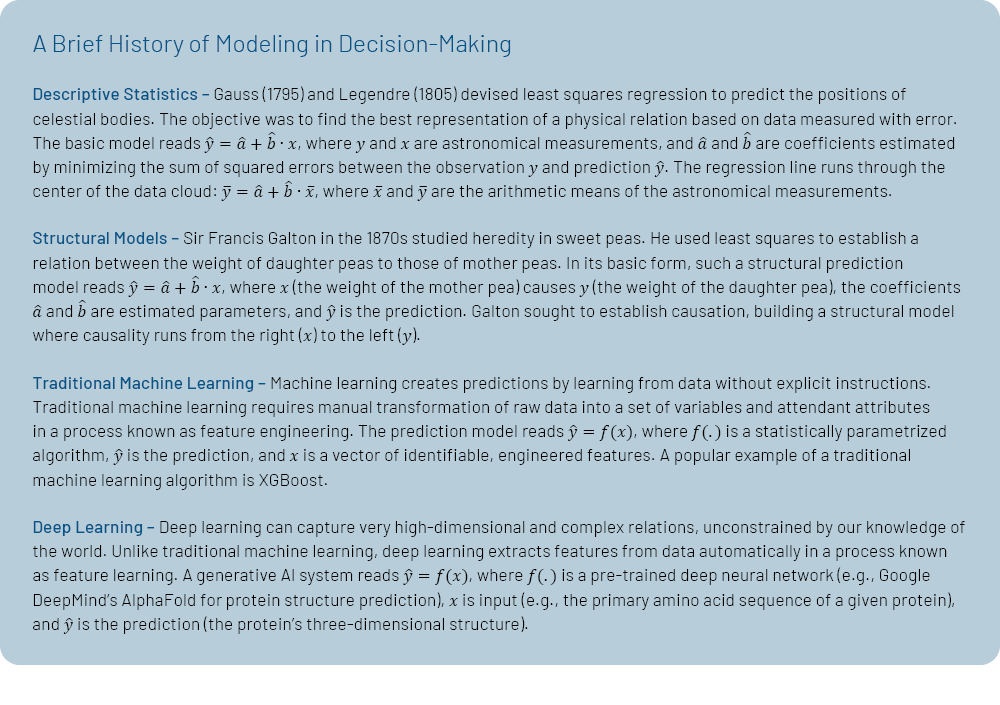

From Modeling Causation to Establishing Connections

Quantification has long been inspired by Newtonian mechanics, which emerged in the 17th century and laid the groundwork for new quantitative methods. In their quest to describe the movements of celestial bodies from imperfectly measured observations, Carl Friedrich Gauss and Adrien-Marie Legendre developed least squares regression around the turn of the 19th century.[4] It wasn’t until Sir Francis Galton studied biological inheritance in the 1870s that least squares regression was used to quantify causal relations.[5] These structural regression models of hypothesized causal effects have long been the backbone of predictive modeling in insurance, especially with the rise of generalized linear models (GLMs) in the 1980s.

The advent of machine learning has challenged the dominance of structural models in predictive modeling. Structural models generate predictions based on quantification of manually specified relations, and these relations are typically interpreted as causal. Causal interpretation is associated with the stability of the estimated relation, a link that may be stronger in the physical world than in social and economic settings. Machine learning, on the other hand, is agnostic to causal relations and makes predictions solely based on connections.[6]

In regression analysis, the number of explanatory variables cannot exceed the number of observations, and the functional form of their influences must be manually specified.[7] In contrast, machine learning is not constrained by the number of variables that can contribute to the prediction, and the form of these contributions does not need to be specified in advance. Traditional machine learning relies on humans to extract the attributes that represent the raw data before processing by the algorithm.[8] Deep learning, however, performs automatic feature extraction, reducing the need for human intervention.[9]
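The constraint on the number of explanatory variables can be made concrete with a small sketch. The data below are invented purely for illustration: with three explanatory variables but only two observations, two different coefficient vectors fit the observations exactly, so least squares cannot single out one set of estimates.

```python
# Toy illustration (invented data): 2 observations, 3 explanatory variables.
X = [
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 1.0],
]
y = [1.0, 2.0]

def predict(X, beta):
    """Linear prediction: one fitted value per row of X."""
    return [sum(x_ij * b_j for x_ij, b_j in zip(row, beta)) for row in X]

def sum_squared_residuals(X, y, beta):
    """Least squares criterion for a given coefficient vector."""
    return sum((y_i - f_i) ** 2 for y_i, f_i in zip(y, predict(X, beta)))

# Two distinct candidate coefficient vectors.
beta_a = [1.0, 2.0, 0.0]
beta_b = [0.0, 1.0, 1.0]

ssr_a = sum_squared_residuals(X, y, beta_a)
ssr_b = sum_squared_residuals(X, y, beta_b)
# Both candidates reproduce y exactly, so the criterion cannot choose between them.
```

Because both candidates achieve a perfect fit, the estimated "effects" are not identified, which is why a structural regression needs at least as many observations as explanatory variables.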

From Averaging Over Existing Data to Creating New Data

At their core, deep learning systems like OpenAI’s GPT‑4 for text generation and DALL‑E 3 for image generation create content by averaging over existing data, a method rooted in regression models. Gauss and Legendre developed ordinary least squares to draw a line through the center of a data cloud, where the center is defined by the arithmetic means of the variables on both sides of the regression equation. Besides the mean, other important statistical averages include the median (the central value of ordered data) and the mode (the most likely value).
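As a minimal sketch of the averaging idea, the following example fits an ordinary least squares line to invented data and checks that the line passes through the point defined by the arithmetic means of both variables. It also computes the three averages mentioned above for a small sample. The function name `ols_line` and all numbers are assumptions made for illustration.

```python
from statistics import mean, median, mode

def ols_line(xs, ys):
    """Return (intercept, slope) of the least squares line y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

# Invented data cloud.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]

intercept, slope = ols_line(xs, ys)
# Evaluating the fitted line at the mean of x returns the mean of y (up to rounding).
fitted_at_mean = intercept + slope * mean(xs)

# The three statistical averages for a small skewed sample.
sample = [1, 2, 2, 3, 9]
averages = {"mean": mean(sample), "median": median(sample), "mode": mode(sample)}
```

In the skewed sample, the single large value pulls the mean above the median and the mode, which shows that the three averages can give different answers for the same data.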

In conventional text generation, a large language model predicts the next word by invoking the mode, choosing the word most likely to occur in the given context. Similarly, when an image generation model is asked to produce an image of a cat, the system generates an “average cat.”[10]
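Choosing the mode can be sketched with a toy model that is far simpler than a large language model: it counts which word follows which in a tiny invented corpus and returns the most frequent successor. The corpus and the function names are hypothetical, and a one-word context stands in for the much longer contexts that real systems use.

```python
from collections import Counter, defaultdict

# Tiny invented corpus; real models learn from vastly more text.
corpus = "the cat sat on the mat . the cat lay on the sofa . the cat saw the dog ."

def build_bigram_counts(text):
    """Count, for every word, how often each other word follows it."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for previous, following in zip(tokens, tokens[1:]):
        counts[previous][following] += 1
    return counts

def most_likely_next(counts, context_word):
    """Return the mode of the observed next-word distribution for the context."""
    return counts[context_word].most_common(1)[0][0]

counts = build_bigram_counts(corpus)
next_after_the = most_likely_next(counts, "the")
next_after_on = most_likely_next(counts, "on")
```

The prediction is simply the most common continuation seen in existing data, which is the sense in which this kind of generation averages over what already exists.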

Reasoning capabilities extend the functionalities of generative AI models beyond merely averaging over existing data to generating new data. To build intuition, let’s consider a popular syllogistic argument:

All men are mortal.

Socrates is a man.

Therefore, Socrates is mortal.[11]

The first two lines are premises, which we can consider as existing data. The third line, a conclusion, is derived through reasoning, representing new data.
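The distinction between existing and new data can be illustrated with a few lines of rule-based inference. This is only a sketch of classical forward chaining, not a description of how reasoning models work internally. The representation of facts as `(predicate, subject)` pairs and the function name `derive` are assumptions.

```python
# Existing data: one fact and one rule.
facts = {("man", "Socrates")}   # "Socrates is a man."
rules = [("man", "mortal")]     # "All men are mortal": man(x) implies mortal(x).

def derive(facts, rules):
    """Apply every rule repeatedly until no new fact can be added."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, subject in list(known):
                new_fact = (conclusion, subject)
                if predicate == premise and new_fact not in known:
                    known.add(new_fact)
                    changed = True
    return known

# New data: whatever was derived beyond the original facts.
derived = derive(facts, rules) - facts
```

Here `derived` contains the single statement that Socrates is mortal, which appears nowhere in the inputs and exists only as a result of applying the rule.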

AlphaFold 2, in predicting the 3D structures of proteins, incorporates elements that parallel human spatial reasoning.[12] There are also mathematical reasoning models, most notably Google DeepMind’s AlphaProof and AlphaGeometry 2. These two models together solved four out of six problems at the 2024 International Mathematical Olympiad (IMO), achieving a score in the high end of the silver medal category.[13] Among large language models with advanced reasoning capabilities are OpenAI’s o3 and DeepSeek’s R1.

Generative AI as an Abstraction Layer

The predictive power of deep learning has significant implications for the role of quantitative methods in decision-making. For example, AlphaFold solved the 50‑year-old problem of protein folding without understanding the folding process itself.[14] Similarly, the Artificial Intelligence Forecasting System (AIFS), a deep learning system for weather forecasting introduced by the European Centre for Medium-Range Weather Forecasts (ECMWF) in February 2025, outperforms the most accurate physics-based models for many target variables without understanding meteorology.[15]

Just as these generative AI systems lack an understanding of biology or physics, we do not fully comprehend how these models arrive at their predictions.[16] Deep learning systems provide predictions without the opportunity for causal interpretation (“why”)[17] and related counterfactual inspection (“what if”). However, causal interpretation is crucial for forming narratives, which help us organize information and communicate it coherently. Narrative plays a key role in corporate decision‑making.[18]

Generative AI can be viewed as an abstraction layer. In software engineering, abstraction layers are designed to hide the inner workings of subsystems. In science, too, abstraction layers simplify complex systems. For instance, chemistry serves as an abstraction layer over physics, allowing us to understand chemical processes without fully grasping the underlying physics.[19]

Consider how we drive motor vehicles without fully understanding the physics that powers them. When we learned to drive, we formed an abstraction layer. We verify this abstraction based on predictive benefits. For example, we predict that stepping on the accelerator will make the car move faster, and stepping on the brakes will slow it down. When driving an unfamiliar car, our predictions may be slightly off, and we adjust our abstraction accordingly.
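In software terms, the idea might be sketched as follows. The caller sees only a `predict` method and judges each model by its prediction error, without looking inside. All class names, the lookup table, and the numbers are hypothetical stand-ins chosen for illustration; the opaque class only mimics a black-box model.

```python
from typing import Protocol, Sequence

class Predictor(Protocol):
    """The abstraction layer: callers rely on this interface only."""
    def predict(self, x: float) -> float: ...

class RuleBasedPredictor:
    """Hypothetical structural model: an explicit, inspectable formula."""
    def predict(self, x: float) -> float:
        return 2.0 * x + 1.0

class OpaquePredictor:
    """Hypothetical stand-in for a black-box model; its internals stay hidden."""
    def __init__(self) -> None:
        self._table = {0.0: 1.1, 1.0: 2.9, 2.0: 5.2}

    def predict(self, x: float) -> float:
        return self._table[x]

def mean_absolute_error(model: Predictor, xs: Sequence[float], ys: Sequence[float]) -> float:
    """Verify a model purely by its predictive performance."""
    return sum(abs(model.predict(x) - y) for x, y in zip(xs, ys)) / len(xs)

# Invented observations used to check the predictions.
xs = [0.0, 1.0, 2.0]
ys = [1.0, 3.0, 5.0]

error_rule_based = mean_absolute_error(RuleBasedPredictor(), xs, ys)
error_opaque = mean_absolute_error(OpaquePredictor(), xs, ys)
```

The verification function never asks why a prediction was made. It treats both models identically, which mirrors how a driver checks that the accelerator and brakes behave as expected without inspecting the engine.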

Conclusion

The Nobel Prizes for AI marked a milestone, not only in scientific discovery but also in decision-making. Generative AI systems provide powerful predictions that resist causal interpretation and the formation of accompanying narratives. These systems require us to abstract from the underlying causal forces and focus on verifying the predictive benefits. Abstraction is a well-known concept in both science and everyday life.

1. See The Nobel Foundation, “Nobel Prizes 2024,” https://www.nobelprize.org/all-nobel-prizes-2024/.

2. See Critical Assessment of Techniques for Protein Structure Prediction (CASP), “14th Community Wide Experiment on the Critical Assessment of Techniques for Protein Structure Prediction,” https://predictioncenter.org/casp14/.

3. See Google DeepMind, “AlphaFold: Accelerating breakthroughs in biology with AI,” https://deepmind.google/technologies/alphafold/.

4. See Stephen M. Stigler, “Gauss and the Invention of Least Squares,” Annals of Statistics 9(3), 465‑474, 1981, https://projecteuclid.org/journals/annals-of-statistics/volume-9/issue-3/Gauss-and-the-Invention-of-Least-Squares/10.1214/aos/1176345451.full.

5. See Jeffrey M. Stanton, “Galton, Pearson, and the Peas: A Brief History of Linear Regression for Statistics Instructors,” Journal of Statistics Education 9(3), 2001, https://www.tandfonline.com/doi/full/10.1080/10691898.2001.11910537.

6. Causal inference using machine learning is possible using a technique known as double/debiased machine learning. See Victor Chernozhukov, Denis Chetverikov, Mert Demirer, Esther Duflo, Christian Hansen, Whitney K. Newey, and James Robins, “Double/Debiased Machine Learning for Treatment and Structural Parameters,” Econometrics Journal 21(1), C1‑C68, 2018, Working Paper (November 2024) at https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2999543. For an application to LASSO (Least Absolute Shrinkage and Selection Operator) regression, see Alexandre Belloni, Victor Chernozhukov, and Christian Hansen, “Double/Debiased/Neyman Machine Learning of Treatment Effects,” Journal of Economic Perspectives 28(2), 29‑50, 2014, https://pubs.aeaweb.org/doi/pdfplus/10.1257/jep.28.2.29.

7. Advanced regression models, such as change-point specifications and approaches to stochastic variable selection, allow for the functional form to be determined endogenously, albeit in a narrowly defined way.

8. An example of feature engineering for a structured data set is the inclusion of differences to group means for continuous variables, such as income. Groups may be defined by sex, age, location, etc. Another example is the inclusion of attributes for location. There are scores of location-related attributes, such as rural vs. metropolitan, distance from the coast, distance to the equator (for seasonal variation), etc. Even for a limited number of raw data items, engineering can generate many more features than a structural regression equation can include as explanatory variables.

9. Traditional machine learning remains relevant despite advances in deep learning. Traditional machine learning methods are particularly valuable where interpretability is important, computational resources are limited, or when working with structured data that do not require the complex pattern recognition capabilities of deep learning.

10. See Financial Times, “FT Interview: Demis Hassabis on AI Development,” January 22, 2025, https://www.ft.com/video/083aef10-60e1-45ed-95f4-3c33a2e39349.

11. See John Stuart Mill, A System of Logic, Ratiocinative and Inductive: Being a Connected View of the Principles of Evidence, and the Methods of Scientific Investigation, Volume 1, 1843, London: J.W. Parker, page 245, https://archive.org/details/systemoflogicrat01millrich/page/244/.

12. See Yiran Jiang, “Parallels Between AlphaFold’s Neural Architecture and Human Spatial Reasoning,” Dartmouth Undergraduate Journal of Science, December 17, 2024, https://sites.dartmouth.edu/dujs/2024/12/17/parallels-between-alphafolds-neural-architecture-and-human-spatial-reasoning/.

13. See Google DeepMind, July 25, 2024, “AI achieves silver-medal standard solving International Mathematical Olympiad problems,” https://deepmind.google/discover/blog/ai-solves-imo-problems-at-silver-medal-level/.

14. See European Molecular Biology Laboratory: European Bioinformatics Institute (EMBL‑EBI), “AlphaFold: A practical guide: What is the protein folding problem?” https://www.ebi.ac.uk/training/online/courses/alphafold/an-introductory-guide-to-its-strengths-and-limitations/what-is-the-protein-folding-problem/.

15. See European Centre for Medium-Range Weather Forecasts (ECMWF), “ECMWF’s AI forecasts become operational,” February 25, 2025, https://www.ecmwf.int/en/about/media-centre/news/2025/ecmwfs-ai-forecasts-become-operational.

16. See Madhumita Murgia, “Google DeepMind’s Demis Hassabis on his Nobel Prize: ‘It feels like a watershed moment for AI’,” Financial Times, October 21, 2024, https://www.ft.com/content/72d2c2b1-493b-4520-ae10-41c1a7f3b7e4.

17. Ibid.

18. See Ellen S. O’Connor, “Telling decisions: The role of narrative in organizational decision making,” in: Zur Shapira (ed.) Organizational Decision Making, Cambridge University Press, Chapter 14, 304‑323, 1997.

19. See Madhumita Murgia, op. cit.